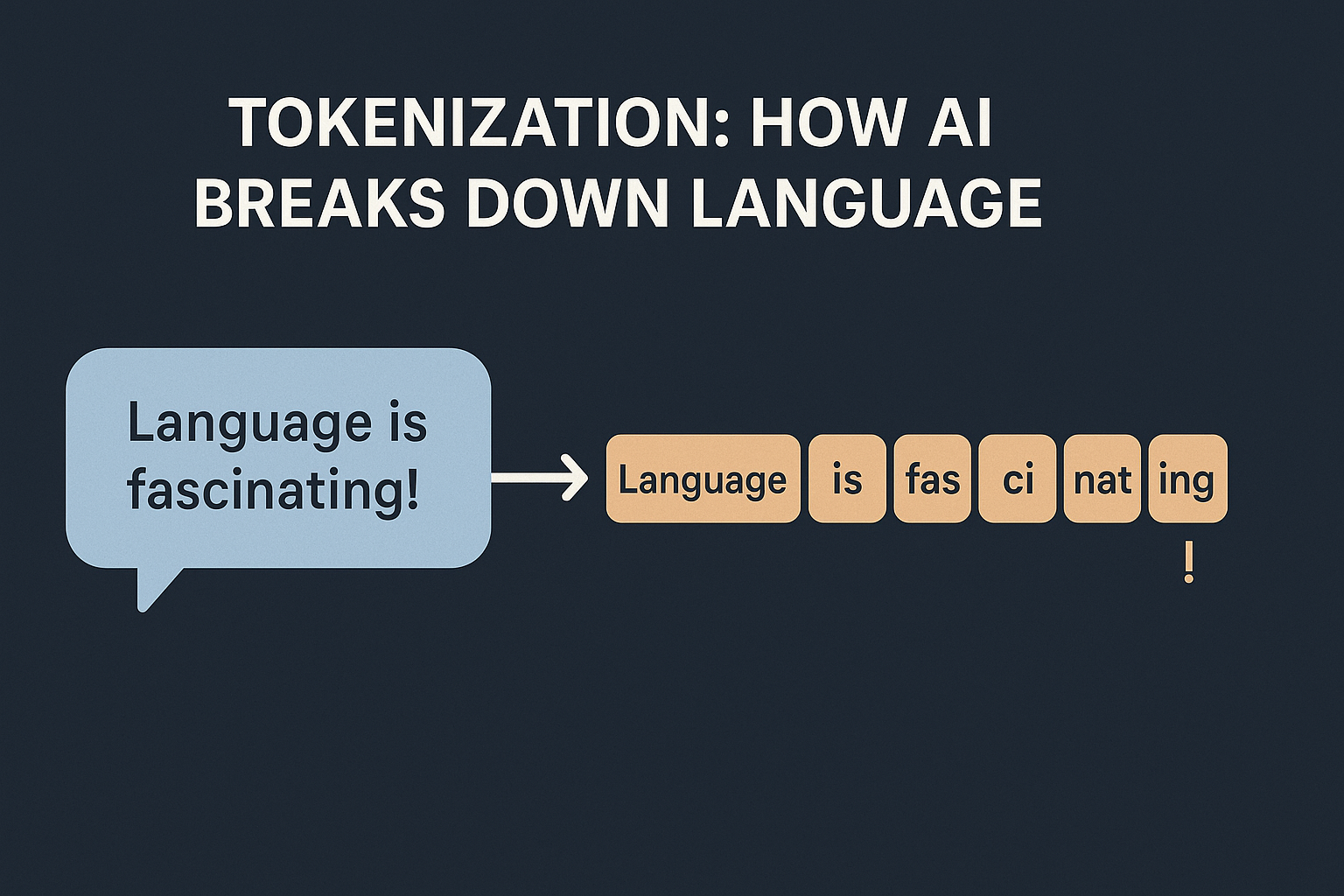

I am assuming the audience for this blog is programmers who may or may not have exposure to AI. Whenever we discuss LLMs, we often hear the term “tokenization”. In AI, it simply refers to how models break text into smaller pieces, called tokens. Why can’t we just feed text to an AI? Machines don’t work with …
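To make "breaking text into smaller pieces" concrete, here is a minimal sketch of the simplest possible scheme, a character-level tokenizer. It is an illustration only: the function names `build_vocab`, `encode`, and `decode` are hypothetical, and production LLMs typically use subword tokenizers rather than single characters.

```python
def build_vocab(text):
    # Assign every distinct character an integer ID.
    # Sorting keeps the mapping deterministic across runs.
    return {ch: i for i, ch in enumerate(sorted(set(text)))}


def encode(text, vocab):
    # Text -> list of integer token IDs.
    return [vocab[ch] for ch in text]


def decode(ids, vocab):
    # List of integer token IDs -> text.
    inverse = {i: ch for ch, i in vocab.items()}
    return "".join(inverse[i] for i in ids)


text = "hello world"
vocab = build_vocab(text)
ids = encode(text, vocab)

print(ids)                 # [3, 2, 4, 4, 5, 0, 7, 5, 6, 4, 1]
print(decode(ids, vocab))  # hello world
```

The model only ever sees the list of integers; the vocabulary is what lets us move back and forth between those numbers and readable text.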

Have you ever wondered:

I’ll take you on a simple, intuitive journey, building a tiny version of GPT from scratch that does exactly this, so you can walk away knowing precisely how it all works under the hood. We’ll do this by:

How GPTs Learn and Store Knowledge

Let’s start simple. Imagine you’re learning a new language. You …