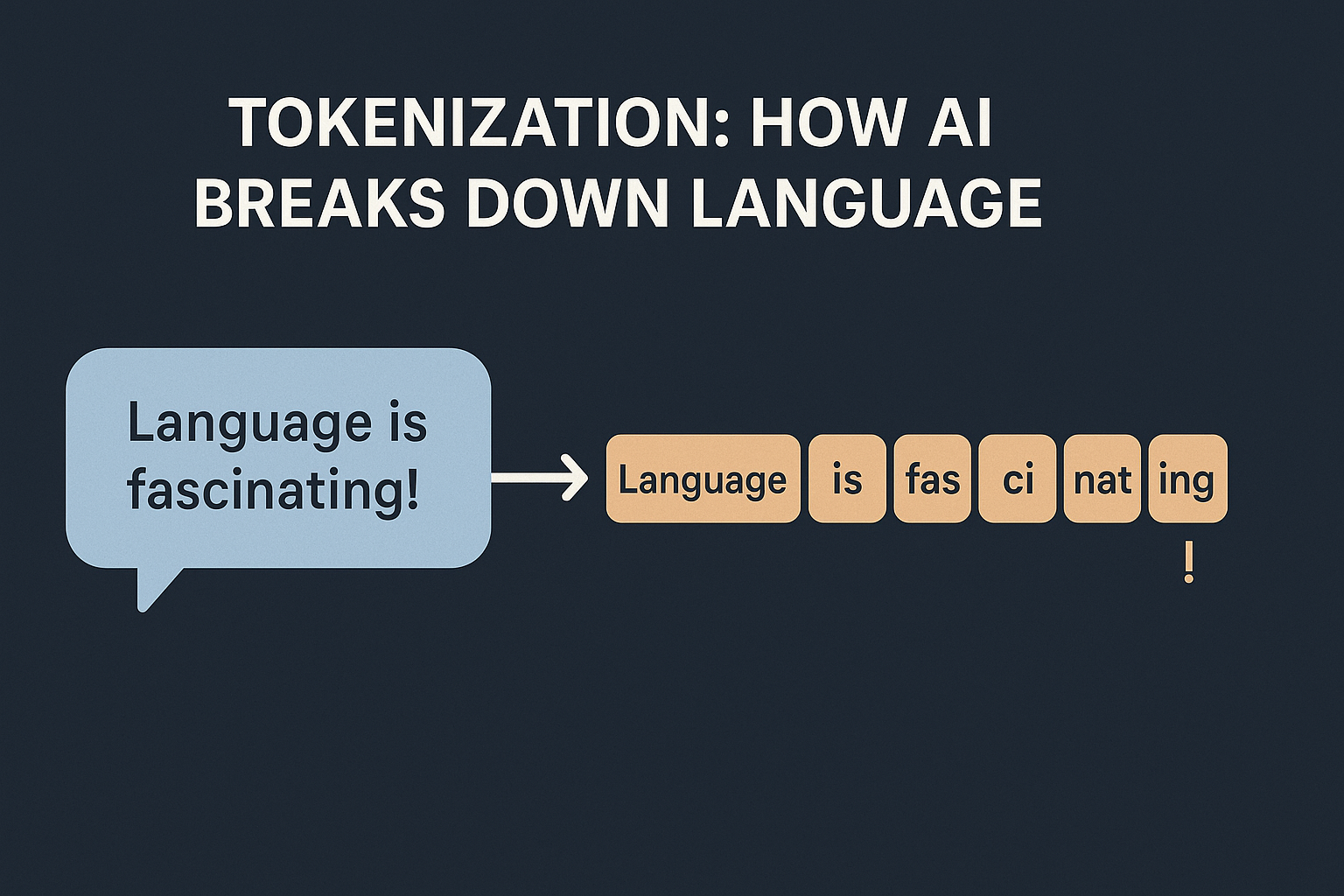

I am assuming the audience for this blog is programmers who may or may not have exposure to AI. Whenever we discuss LLMs, we often hear the term “tokenization”. In AI, it simply means how models break text into smaller pieces.

Why can’t we just feed text to an AI?

Machines don’t work with strings; they work with numbers. Before an AI model can learn from or generate text, the text has to be converted into sequences of numbers. Tokenization does that.

It splits text into tokens, then maps each token to a unique ID. These sequences of IDs become the inputs to the neural network.
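To make the "split, then map to IDs" idea concrete, here is a minimal sketch of a toy word-level tokenizer. It is not how GPT-style tokenizers work internally; the function names `build_vocab` and `encode` and the tiny corpus are made up for illustration, and splitting on whitespace is a simplifying assumption.

```python
def build_vocab(corpus):
    """Give every distinct whitespace-separated token an integer ID,
    in order of first appearance (a toy scheme, for illustration only)."""
    vocab = {}
    for sentence in corpus:
        for token in sentence.split():
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab


def encode(text, vocab):
    """Turn text into the list of IDs the model would receive."""
    return [vocab[token] for token in text.split()]


corpus = ["the cat sat", "the dog sat down"]
vocab = build_vocab(corpus)
ids = encode("the dog sat", vocab)

print(vocab)
print(ids)
```

Note that `encode` raises a `KeyError` for any word that is not in the vocabulary. That limitation is exactly why real tokenizers do not stop at whole words.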

What exactly is a token?

Depending on the tokenizer, a token could be:

- a whole word like “play”

- part of a word like “un”, “believ”, “able”

- or even punctuation like “.”

Most modern models like GPT use subword tokenization. This means they break words into smaller, frequently occurring pieces, so they can still handle new words they have never seen.
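As a rough illustration of how subword pieces cover unseen words, the sketch below splits a word by always taking the longest piece found in a small vocabulary. This is a greedy longest-match scheme, not the BPE procedure that GPT models use, and the `toy_vocab` set and the function name are assumptions made up for this example.

```python
def greedy_subword_tokenize(word, vocab):
    """Split `word` left to right, always taking the longest piece in `vocab`.
    Characters that match no piece are emitted on their own."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate until it is a known piece or becomes empty.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            # Nothing matched: fall back to a single character.
            end = start + 1
        pieces.append(word[start:end])
        start = end
    return pieces


toy_vocab = {"un", "believ", "able", "play", "ful", "ing"}

print(greedy_subword_tokenize("unbelievable", toy_vocab))
print(greedy_subword_tokenize("playful", toy_vocab))
```

Neither “unbelievable” nor “playful” is in the vocabulary as a whole word, yet both can be expressed with pieces that are.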

A fun analogy

Imagine you want to send a LEGO model by mail. Instead of mailing the finished model (the text), you break it into pieces (tokens), number them, and send the list. The recipient rebuilds it using the instructions (the model’s learned weights).

Let’s check with a simple Python script:

    from transformers import AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained("gpt2")
    text = "Tokenization is fun!"

    # Split the text into subword tokens, then look up each token's ID.
    tokens = tokenizer.tokenize(text)
    ids = tokenizer.convert_tokens_to_ids(tokens)

    print(tokens)
    print(ids)

Output:

    ['Token', 'ization', 'Ġis', 'Ġfun', '!']
    [30642, 1634, 318, 1257, 0]

Like me, you might be wondering what this special character ‘Ġ’ is, since it was not part of the original text.

Those funny-looking tokens come from how certain tokenizers (especially the byte-level Byte-Pair Encoding (BPE) tokenizer used by GPT-2) handle spaces.

- The “Ġ” symbol is a special marker that means “this token starts with a space.”

- So “Ġis” really means “ is” (space + “is”), and “Ġfun” means “ fun”.

This is important because:

- It helps the tokenizer preserve word boundaries.

- It means “is” and “ is” are different tokens (which affects context).
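For the curious, the “Ġ” is not arbitrary. The sketch below is a re-creation, written from memory, of the byte-to-character table that GPT-2's byte-level BPE uses to make every byte printable; treat it as an approximation rather than the library's actual code. The helper `to_visible` is a made-up name for this example.

```python
def bytes_to_unicode():
    """Map each of the 256 byte values to a printable Unicode character."""
    # Bytes that are already printable keep their own character.
    printable = (
        list(range(0x21, 0x7E + 1))    # '!' to '~'
        + list(range(0xA1, 0xAC + 1))  # '¡' to '¬'
        + list(range(0xAE, 0xFF + 1))  # '®' to 'ÿ'
    )
    mapping = {}
    shift = 0
    for byte in range(256):
        if byte in printable:
            mapping[byte] = chr(byte)
        else:
            # Unprintable bytes (space, newline, ...) move to code points 256 and up.
            mapping[byte] = chr(256 + shift)
            shift += 1
    return mapping


byte_map = bytes_to_unicode()


def to_visible(text):
    """Show text the way a byte-level tokenizer displays it (hypothetical helper)."""
    return "".join(byte_map[b] for b in text.encode("utf-8"))


print(to_visible(" is"))
print(to_visible("is"))
```

Under this mapping the space byte (32) lands on code point 288, which is “Ġ”, so “ is” and “is” show up as visibly different strings.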

You may ask: how can we prove that these special characters do not get in the way when we rebuild the text from the token IDs?

Let’s check:

    print(tokenizer.convert_ids_to_tokens(ids))
    print(tokenizer.decode(ids))

Output:

    ['Token', 'ization', 'Ġis', 'Ġfun', '!']
    Tokenization is fun!

Great! Now we know that the special characters cause no trouble when rebuilding the sentence.
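To see why the round trip works, here is a simplified sketch of the decoding step. It only handles the space marker; as far as I understand, the real `decode` reverses a full byte-to-character mapping, so this is an approximation that holds for plain ASCII text like our example. The function name `detokenize` is made up.

```python
tokens = ["Token", "ization", "Ġis", "Ġfun", "!"]


def detokenize(tokens):
    """Concatenate the pieces, then turn each space marker back into a real space."""
    return "".join(tokens).replace("Ġ", " ")


text = detokenize(tokens)
print(text)
```

Because the space lives inside the token itself, simple concatenation is enough to put every word boundary back where it was.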

Why is this useful?

- Handles new words: “Unbelievable” can be split into pieces such as “un”, “believ”, “able”, so the model is not lost.

- Smaller vocabularies: Instead of millions of whole words, it learns tens of thousands of reusable pieces (GPT-2’s vocabulary has 50,257 entries).

- Better learning: The model can pick up on shared roots and suffixes (“play”, “playing”, “playful”), much as we do.

Analogy:

Think of a child reading:

- If they know “play” and see “playful,” they can guess the meaning by recognizing the root.

- Tokenizers do something similar: break things into subwords or chunks they’ve seen before.

Benefits:

- Handles new words by breaking them into parts.

- Keeps the vocabulary size manageable (so training isn’t impossible).

- Improves generalization.
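Where do those reusable pieces come from? The sketch below shows the core idea of BPE training on a tiny, made-up word-frequency table: start from single characters and repeatedly merge the most frequent adjacent pair. It is a simplified, character-level version; GPT-2 works on bytes and adds extra pre-processing that is left out here. The function name and the toy counts are assumptions for illustration.

```python
from collections import Counter


def learn_bpe_merges(word_counts, num_merges):
    """Repeatedly merge the most frequent adjacent pair of symbols."""
    # Start with every word split into single characters.
    words = {tuple(word): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        # Count how often each adjacent pair occurs, weighted by word frequency.
        pair_counts = Counter()
        for symbols, count in words.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += count
        if not pair_counts:
            break
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        # Rewrite every word with the chosen pair fused into one symbol.
        merged_words = {}
        for symbols, count in words.items():
            out, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    out.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    out.append(symbols[i])
                    i += 1
            key = tuple(out)
            merged_words[key] = merged_words.get(key, 0) + count
        words = merged_words
    return merges, words


toy_counts = {"play": 5, "playing": 3, "playful": 2}
merges, words = learn_bpe_merges(toy_counts, num_merges=3)

print(merges)
print(list(words))
```

After three merges the shared root “play” has become a single symbol, while the rarer endings are still spelled out character by character.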

What’s the fun if you cannot try it yourself? See what happens when you run the following code snippet:

    # Reuses the GPT-2 tokenizer loaded earlier.
    for sentence in ["Hello world!", "Tokenization is cool", "ChatGPTified"]:
        print(f"{sentence} ->", tokenizer.tokenize(sentence))

Notice how even made-up words break into smaller pieces.

Wrapping up

Tokenization is the quiet hero of Natural Language Processing, translating human language into neat blocks of IDs so that models can understand and create text.

Next time you see bizarre tokens or IDs, remember: it’s just AI building sentences from LEGO bricks.